Unde aether sidera pascit? — From where would the sky feed the stars?

Lucretius

When language itself comes under threat—narrowed by external constraints or stripped of its generative power—a (re)turn to poetics becomes an act of relational resistance. A poetics of relationality emerges as a living collaboratory between time, place, and self, leading from reflection to becoming to action. For me, this lexical path first surfaced in the moss-soft forests of Himmelbjerget in Denmark, where nature, art, and spirit dance together—from the roots of Danish democracy to Heimdall’s home, to the vibrancy of Himmelbjerggården.

It continued in the chaparral woodlands near my home in Arizona, where manzanita, oak, and the occasional alligator juniper stand as sentinels. There, among their interwoven forms, I found both solace and provocation in the shifting colours of emergent spring. I reached toward what I know: those rare and intimate moments when the human and more-than-human entwine in shared presence.

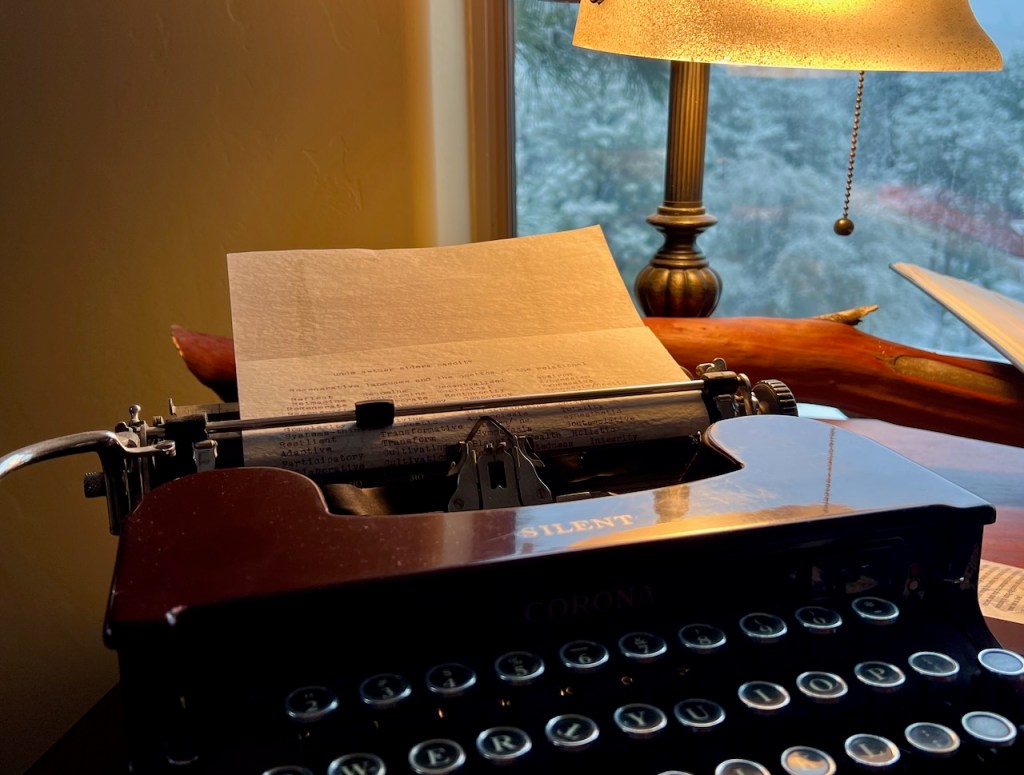

This is a day for calligraphies and choreographies of possibility, where language doesn’t describe the world but co-creates it. From manzanita-infused ink teasing radicles of meaning through translucent typing paper to laptop, the layered materiality of inscription imbues these words with generative power.

I find grounding in the stillness Thomas Merton names, where the mountain is SEEN only after one consents to the impossible paradox: it is and is not. And then—another opening:

“The ‘new consciousness’ reading the calligraphy of snow and rock from the air. A sign of snow on a mountainside as if my ancestors were hailing me… we burst into secrets.”

This new consciousness is not about transcendence but rather about intimacy: an embodiment across place, self, and more-than-human life. It is not language as mastery, but as invitation. The following lexicon is offered in that spirit—an incomplete, living gesture toward what might yet emerge when we let meaning root, drift, and move with the world.

| Reflect | Becoming | Decentralised | Apeiron |

| Reimagine | Co-becoming | Ecological | Expression |

| Regenerate | Collaborate | Reciprocity | Expressive |

| Regeneration | Collaboratory | Democracy | Synnoesis/noetic |

| Regenerative | Relational | Flourishing | Dialogic |

| Generate | Relationality | Flourish | Interspecies |

| Complexity | Evolving | Self-organising | Unfixed |

| Systems-thinking | Poetic | Autopoiesis | Totality |

| Resilient | Transformative | Learning | Synesthetic |

| Adaptive | Transform | Sympoiesis | Contemplative |

| Participatory | Cultivating | Planetary Health | Holistic |

| Collaborative | Cultivation | Interconnectedness | Integrity |

| Co-creative | Civic engagement | Experiential | Poetic-knowing |

| Emergent / Emergence | Distributed | Transdisciplinary | Relational grace |

| Soul-making | Sahaj | Composting | Bildung |

| Embodiment | Poetic | Spirit / Spiritual / ity | Inner radiance |

| Art | Commons | Sovereignty | Craft |

| Mobility | Flow | Reciprocity | Movement |

| (Re)compose | Drift | Kinesis | Awareness |

| Breath / Breathe | Sense / Sensation | Circulate / Circulation | Assembly |

| Assemblage | Expression | Action | Somatic |

| Deterritorialisation | Tactile | Rhizome | Radical |

| Courage | Hope | Invite | Become |

l’amor che move il sole e l’altre stelle. The love that moves the sun and the other stars.

Dante Alighieri